Welcome to DeepOBS

DeepOBS is a benchmarking suite that drastically simplifies, automates and improves the evaluation of deep learning optimizers.

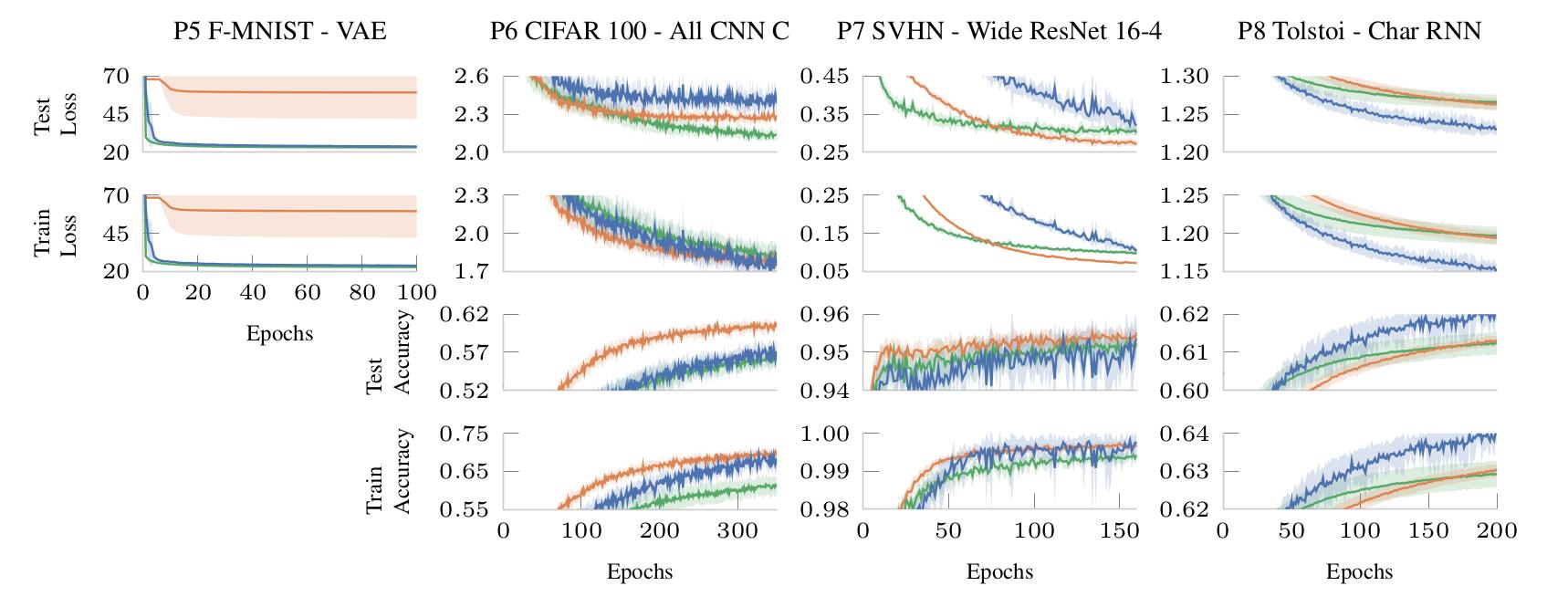

It can evaluate the performance of new optimizers on a variety of real-world test problems and automatically compare them with realistic baselines.

DeepOBS automates several steps when benchmarking deep learning optimizers:

- Downloading and preparing data sets.

- Setting up test problems consisting of contemporary data sets and realistic deep learning architectures.

- Running the optimizers on multiple test problems and logging relevant metrics.

- Reporting and visualizing the results of the optimizer benchmark.
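To make the shape of such a benchmark concrete, the steps above can be sketched as a small loop over optimizers and test problems. This is a toy stand-in written in plain Python, not the DeepOBS API: the names `make_quadratic`, `sgd`, `momentum`, `run_benchmark` and `report` are hypothetical, and one-dimensional quadratics replace real data sets and deep learning architectures.

```python
# Illustrative sketch only: none of these names come from DeepOBS.

def make_quadratic(curvature, start=5.0):
    """Toy 'test problem': the loss 0.5 * curvature * w**2 and its gradient."""
    return {
        "loss": lambda w: 0.5 * curvature * w * w,
        "grad": lambda w: curvature * w,
        "start": start,
    }

def sgd(lr):
    """Plain gradient descent step."""
    def step(w, g, state):
        return w - lr * g
    return step

def momentum(lr, mu=0.9):
    """Heavy-ball momentum; the velocity is kept in the per-run state dict."""
    def step(w, g, state):
        state["v"] = mu * state.get("v", 0.0) + g
        return w - lr * state["v"]
    return step

def run_benchmark(optimizers, problems, num_steps=50):
    """Run every optimizer on every problem and log the loss at each step."""
    results = {}
    for opt_name, step in optimizers.items():
        for prob_name, problem in problems.items():
            w, state, losses = problem["start"], {}, []
            for _ in range(num_steps):
                losses.append(problem["loss"](w))
                w = step(w, problem["grad"](w), state)
            losses.append(problem["loss"](w))  # loss after the final step
            results[(opt_name, prob_name)] = losses
    return results

def report(results):
    """Print the initial and final loss of each run."""
    for (opt_name, prob_name), losses in sorted(results.items()):
        print(f"{opt_name:>8} on {prob_name:<10} "
              f"loss {losses[0]:.3g} -> {losses[-1]:.3g}")

optimizers = {"sgd": sgd(lr=0.1), "momentum": momentum(lr=0.1)}
problems = {"flat": make_quadratic(1.0), "steep": make_quadratic(10.0)}
results = run_benchmark(optimizers, problems)
report(results)
```

Running every optimizer on the same set of problems, with the same logged metric, is what makes the resulting curves comparable. A real benchmark would add the data download, realistic architectures and plotting that this sketch leaves out.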

The current implementation, which works with TensorFlow, is available on GitHub.

We are actively working on a PyTorch version and plan to release it in the coming months. In the meantime, PyTorch users can still use parts of DeepOBS, such as the data preprocessing scripts and the visualization features.

User Guide

API Reference