Welcome to DeepOBS

Warning

This version of DeepOBS is under continuous development and is a beta of DeepOBS 1.2.0.

Many thanks to Aaron Bahde for spearheading the development of DeepOBS 1.2.0.

DeepOBS is a benchmarking suite that drastically simplifies, automates and improves the evaluation of deep learning optimizers.

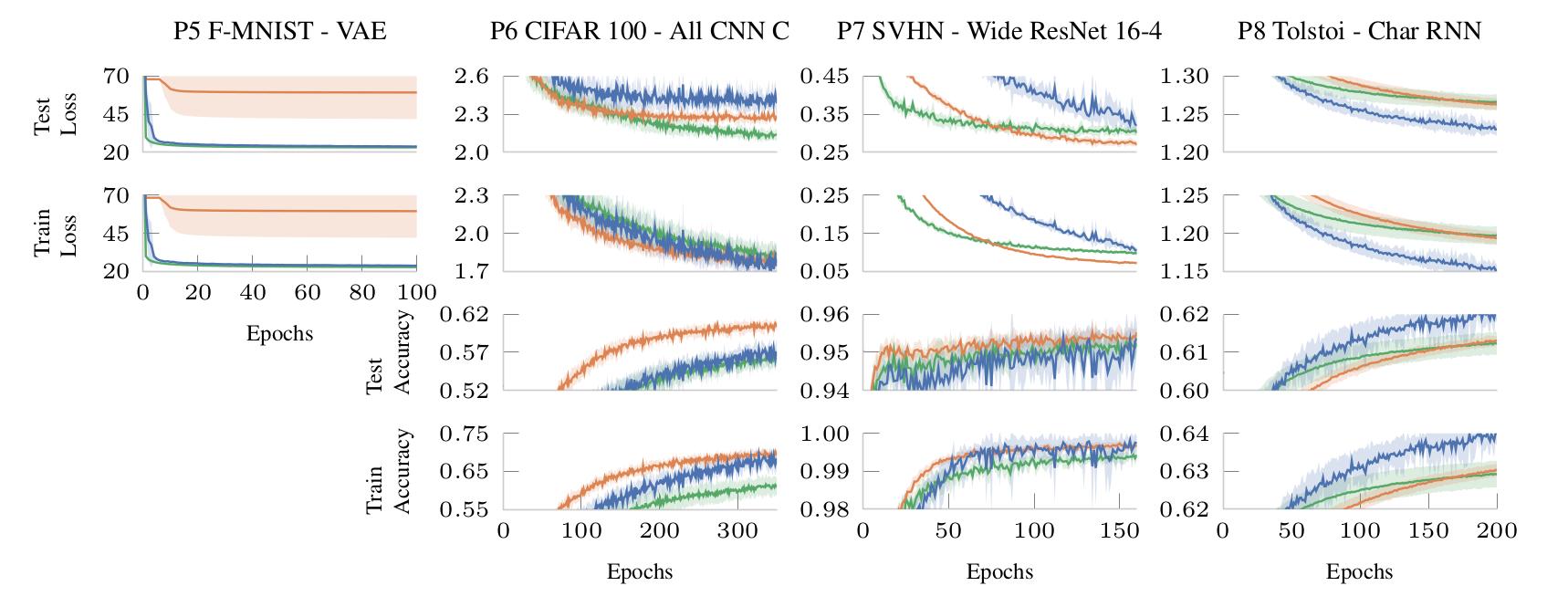

It can evaluate the performance of new optimizers on a variety of real-world test problems and automatically compare them with realistic baselines.

DeepOBS automates several steps when benchmarking deep learning optimizers:

- Downloading and preparing data sets.

- Setting up test problems consisting of contemporary data sets and realistic deep learning architectures.

- Running the optimizers on multiple test problems and logging relevant metrics.

- Automatic tuning of optimizer hyperparameters.

- Reporting and visualizing the results of the optimizer benchmark.
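
To make the workflow in the list above concrete, here is a minimal, framework-free sketch of what benchmarking an optimizer involves: a test problem, a training run that logs a metric, and a small grid search over the learning rate. It does not use the DeepOBS API. The toy quadratic problem and the names `run_sgd` and `grid_search` are assumptions made up for this illustration.

```python
# Illustrative sketch only: none of these names come from DeepOBS.

CURVATURES = [1.0, 10.0]  # hypothetical toy problem: f(w) = 0.5 * sum(c * w^2)


def loss(w):
    """Value of the toy quadratic at the point w."""
    return 0.5 * sum(c * x * x for c, x in zip(CURVATURES, w))


def grad(w):
    """Gradient of the toy quadratic at the point w."""
    return [c * x for c, x in zip(CURVATURES, w)]


def run_sgd(lr, momentum=0.0, steps=50):
    """Run gradient descent with optional momentum, logging the loss after every step."""
    w = [1.0, 1.0]
    velocity = [0.0, 0.0]
    losses = [loss(w)]  # metric log, starting with the initial loss
    for _ in range(steps):
        g = grad(w)
        velocity = [momentum * v - lr * gi for v, gi in zip(velocity, g)]
        w = [x + v for x, v in zip(w, velocity)]
        losses.append(loss(w))
    return losses


def grid_search(learning_rates, **kwargs):
    """Return (best_lr, final_loss) for the learning rate with the lowest final loss."""
    results = {lr: run_sgd(lr, **kwargs)[-1] for lr in learning_rates}
    best_lr = min(results, key=results.get)
    return best_lr, results[best_lr]


if __name__ == "__main__":
    grid = [0.001, 0.01, 0.1]
    # Compare two optimizer variants, each tuned on the same grid.
    for name, momentum in [("sgd", 0.0), ("momentum", 0.9)]:
        best_lr, final_loss = grid_search(grid, momentum=momentum)
        print(f"{name}: best lr={best_lr}, final loss={final_loss:.3e}")
```

A benchmarking suite wraps these same steps around real data sets and deep learning architectures, so the optimizer under test is the only part that changes between runs.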

The code for the current implementation, which works with both TensorFlow and PyTorch, is available on GitHub.

User Guide